Much like the post on Appium, this Espresso post is tied to activities going on at work.

This isn't published until long after a decision has been made, but it serves as my learning so I can participate in the discussions with more knowledge, which is 90% of the whole point of my blogging this stuff.

Philosophy

There are a few more philosophical views on unit testing that all tie into UI testing. These don't make the discussion any easier.

When unit testing, UI in particular, there's the white box vs black box approach: do I know and control the system, or do I solely interact with it? This discussion runs right alongside the one about doing functional or integration tests.

The last philosophical point is time. How long should unit tests take? The general consensus, and a requirement of TDD, is "quickly". If you're doing UI tests on an emulator with a lot of setup and teardown, there's nothing quick right now. It's the state of the world, much like 20 years ago when IDEs didn't have any refactoring support. Try to find one now that doesn't have the ability to rename all references to something.

Time

Let's address the time one first.

<ide rant>

UI tests are slow. Who cares if they are in the IDE? This is a point that's been brought up against Appium for Android development testing. UI tests won't, and shouldn't, be run as frequently as unit tests. They can't be if you want an effective developer. They are supposed to test the UI, the user interaction, not the logic. This is part of the drive to minimize (with a hard-line approach) logic in the UI layer, which is essentially un-TDD-able, and that drive is what brought about the View-Bridge-Mediator.

I say this, and I TDD'd the Appium test, but it wasn't a smooth process. It's a fragile test that's going to be thrown away, exactly like the tests that would be co-located in the IDE.

I am a fan of consolidating development windows, but it's a small thing compared to using the right tool.

</ide rant>

Now onto time. If we want to be effective, our Red-Green-Refactor cycle must be as quick as possible. Emulator or device testing is currently too slow, so we can't use it as a tool during coding. These are occasional, build-time tests.

Once these are excluded from being run 'constantly', we will run them on the build system. If you have a robust suite of UI tests (which is possibly a smell, since they are fragile), the build is going to take a long time. That means not knowing whether the build broke for 'a long time', which isn't really acceptable.

What I've done in the past is chain builds so that one triggers the next.

The build triggered from the commit only runs the unit tests. Once it passes, it triggers a build that does all the long-running validation: static code analysis (SCA), UI tests, whatever. In a continuous deployment environment, the passing of SCA/UI would trigger the final build process, which is not really building, just deploying or delivering an artifact.

It's an unfortunate state of UI testing that it isn't quick, but it can force better design and separation of concerns.

Functional vs Integration

White Box vs Black Box

This is where my big difference between Appium and Espresso comes into play. Appium is black box testing. Espresso is white box testing. YES - You can do black box out of Espresso (or any time you have white box) but it's easy to slip into white box and not notice.

This is the philosophical discussion: with Appium and black box testing, you are forced to do functional tests (using actual resources). With Espresso, you can do black box integration tests where the network responses are faked (for example). This is what I'm going to do with this post.

I think it's an excellent way to verify behavior with known data. I'm not a fan of checking known values; I'd rather check that, knowing I've put 20 items in a list, it scrolls and I can get a count.

If there are sections, maybe validate a sub-item with the category. Not sure; I haven't done a lot. But only interact with the UI in the fashion a user would.

That's hard to do when you're not confined to it. It's a pain to hold back when you can just get the control directly, like in Espresso.

I think knowing whether you want white or black box testing is a core aspect of the choice. If your UI is devoid of logic, then I'm hard pressed to find a compelling reason to white box it.

These tests then serve a dual purpose (OMG S.R.P) by also being functional tests. They're guaranteed to be hitting the network and available endpoints: real resources, real-world effects. They could bring to light issues that would stay hidden if left to purely white box testing. This was demonstrated in the post on Appium, when I found I'd wired up the Retrofit interface incorrectly.

Onto the Code

I think that's enough philosophizing, or rambling; take your pick. Let's get some ESPRESSO UP IN HERE!!! ... then I'll write a unit test.

Getting it working

I've barely done Espresso before. Most of my early attempts involved Robolectric's UI test functionality. Since I'm new to it, let's hit up the Android docs on Espresso testing.

An interesting thing, and an issue I expected to encounter, is that the docs suggest turning animations off.

Turn off animations on your test device — leaving system animations turned on in the test device might cause unexpected results or may lead your test to fail.

That leaves a pending question: how are animations tested? I'll (maybe) research that another day.

Following the docs to set up Espresso, we've added

androidTestCompile 'com.android.support.test.espresso:espresso-core:2.2.2'

to the app's gradle file.

That then caused an error, so I also had to force the latest support-annotations to be used by adding

androidTestCompile 'com.android.support:support-annotations:25.1.0'

Note: As ruthless refactoring is a part of the process, things may get changed without a lot of callout. For example, I added a new package for the UI layer we already had. I didn't like the classes just hanging out there.

Instead of trying to hard code everything right away, I'm gonna see what the Espresso Test Recorder gets me.

This is a pretty hefty advantage over Appium.

It Compiles

Neato. I was having trouble getting the emulator to run; Docker and the Android Emulator weren't playing nice. Just a note in case your emulator pops up the 'loading' screen then vanishes.

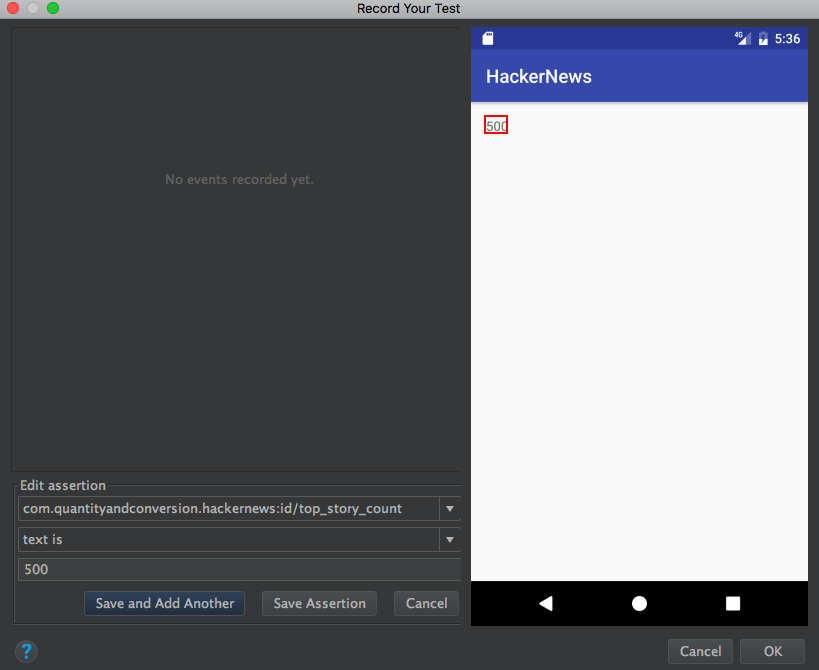

It Records

Got the screen recorder running

and added an assertion for the text

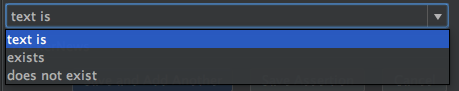

The field where it says 'text is' doesn't have many options, maybe because it's just a text view.

Once you're done, you click finish and it produces the test class for you.

The resulting code concerns me for the longevity of UI tests. Though I have zero doubt that generated code for UI tests will be fragile as hell, the other side is that if you record it, then it works for that UI. It's fragile, but also quick to replace.

Here's the main code for the generated test

    @Test
    public void mainActivityTest() {
        ViewInteraction textView = onView(
                allOf(withId(R.id.top_story_count), withText("500"),
                        childAtPosition(
                                allOf(withId(R.id.activity_main),
                                        childAtPosition(
                                                withId(android.R.id.content),
                                                0)),
                                0),
                        isDisplayed()));
        textView.check(matches(withText("500")));
    }

I have to modify it to get it to check the same thing: that it's a number. It might not always be 500...

With this, yes, the tests will work. Yes, it's a lot quicker to set up and run. It's also going to be much harder to build non-throwaway tests.

If, instead of treating tests as throwaway, we build them in a fashion that tests behavior and ignores values and implementation, they will be less fragile. As we can see in the above test, it references two app R.id values. Change the layout, but not the behavior or anything else, and the test is broken. This could very well be a prickly point, as I'll happily say that if the UI element changes ID, then the test needs to be updated. But if the layout changes and the control being tested keeps the same ID, then the test shouldn't break. I assume the whole reason for keeping the same name is so the code wouldn't break; why should the test?

If we treat UI tests as throwaway, then we'll never actually know if one broke, because we'll just delete it and record a new one. There will be no shared code, no patterns or practices developed.

The Espresso Test Recorder is a detriment to effective UI testing.

Of course, all things in moderation. I'd use the hell out of this tool to figure out ways to do things, and then custom code them.

The fact it also puts this private method into the class

    private static Matcher<View> childAtPosition(
            final Matcher<View> parentMatcher, final int position) {
        return new TypeSafeMatcher<View>() {
            @Override
            public void describeTo(Description description) {
                description.appendText("Child at position " + position + " in parent ");
                parentMatcher.describeTo(description);
            }

            @Override
            public boolean matchesSafely(View view) {
                ViewParent parent = view.getParent();
                return parent instanceof ViewGroup && parentMatcher.matches(parent)
                        && view.equals(((ViewGroup) parent).getChildAt(position));
            }
        };
    }

kinda baffles me. Seems like that'd be a repeating chunk of code... Possibly useful in a library?

At the moment I don't know how to get the value out of the text view. I can match its text value... but I don't care about its ACTUAL VALUE... I just want to see if it's a number, not which number.

I'm confident there's a way to extract the value, but currently, if I strip the test down to

    @Test
    public void mainActivityTest() {
        ViewInteraction textView = onView(withId(R.id.top_story_count));
        textView.check(matches(withText("500")));
    }

it fails, as it doesn't wait for the text to change. That was the importance of the withText("500") in finding the view: it was waiting for that value to show up. This is poor form, only viable for an integration test.

We need to see how to pause... which I found in this StackOverflow answer.

Setting that up (a nice little library class), we get a UI test that pauses long enough for network activity to update the value.

Now the test looks like

    @Test
    public void mainActivityTest() {
        ViewInteraction textView = onView(withId(R.id.top_story_count));
        onView(isRoot()).perform(waitFor(1000));
        textView.check(matches(withText("500")));
    }

Plus a library helper method/class. Seems a lot cleaner than the crap the recorder put out.
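
A fixed one-second pause only works while the network answers inside that second. One alternative, which is my swap-in here and not what the StackOverflow helper does, is to poll a condition until it holds or a timeout expires. This is a minimal plain-Java sketch; `Poll` and `until` are hypothetical names, not part of Espresso.

```java
import java.util.function.BooleanSupplier;

/** Hypothetical helper: polls a condition instead of sleeping for a fixed time. */
final class Poll {
    private Poll() {}

    /**
     * Re-checks the condition every intervalMillis until it returns true
     * or timeoutMillis has elapsed. Returns whether it became true in time.
     */
    static boolean until(BooleanSupplier condition, long timeoutMillis, long intervalMillis) {
        long deadline = System.nanoTime() + timeoutMillis * 1_000_000L;
        while (true) {
            if (condition.getAsBoolean()) {
                return true;
            }
            if (System.nanoTime() - deadline >= 0) {
                return false; // timed out without the condition holding
            }
            try {
                Thread.sleep(intervalMillis);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt(); // preserve the interrupt flag
                return false;
            }
        }
    }
}
```

In an Espresso test the condition could wrap the `check(...)` call and return false when it throws. That assumes failures surface as an `AssertionError` or a `RuntimeException`, which I haven't verified for every failure handler.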

If I wanted to create a helper to make sure a value was an int; I probably could... Seems strange; but I'll let it slide.
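
A rough sketch of what that helper's core might look like in plain Java. `NumericText` and `isWholeNumber` are names I made up for illustration; the check only cares that the text looks like a non-negative whole number, not which number it is.

```java
/** Hypothetical helper: checks the shape of the text, not its value. */
final class NumericText {
    private NumericText() {}

    /** True when the text is non-empty and made up only of the ASCII digits 0-9. */
    static boolean isWholeNumber(CharSequence text) {
        if (text == null || text.length() == 0) {
            return false;
        }
        for (int i = 0; i < text.length(); i++) {
            char c = text.charAt(i);
            if (c < '0' || c > '9') {
                return false; // a sign, space, or letter means it is not a plain count
            }
        }
        return true;
    }
}
```

Espresso's `withText` has an overload that takes a Hamcrest `Matcher<String>`, so wrapping this predicate in a small matcher should let the check drop the hard-coded "500". I haven't wired that part up here.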

I said I'd do integration, not functional, so let's get to that.

Functional

After importing the classes I have for faking the network... I'm not sure I can... And now I realize I can't.

It's a little bit of a mental snafu how the Espresso tests are run. They are a separate app that interacts with the main app. Unless I create specific hooks to update the networking inside the app, it's a no go. I can't fake the network.

This forces Espresso to also be functional tests. Cool. I still get iffy about how much it knows about the makeup of the app.
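
For what it's worth, the kind of hook I mean would look roughly like the sketch below. Everything in it is hypothetical (`StorySource`, `StorySources`, `FakeStorySource`); none of it exists in the app today, and it assumes the app would fetch its data through the registry instead of calling the network directly.

```java
import java.util.Arrays;
import java.util.List;

/** Hypothetical seam: the app would ask this for story ids instead of hitting the network itself. */
interface StorySource {
    List<Integer> topStoryIds();
}

/** Hypothetical registry the app reads from; a test could install a fake before the screen loads. */
final class StorySources {
    private static volatile StorySource current = () -> {
        throw new IllegalStateException("no StorySource installed");
    };

    private StorySources() {}

    static StorySource get() {
        return current;
    }

    static void install(StorySource source) {
        current = source;
    }
}

/** Fake that returns known data, so a test can reason about counts rather than live values. */
final class FakeStorySource implements StorySource {
    private final List<Integer> ids;

    FakeStorySource(Integer... ids) {
        this.ids = Arrays.asList(ids);
    }

    @Override
    public List<Integer> topStoryIds() {
        return ids;
    }
}
```

The cost is that production code grows a seam that exists only for tests, which is the level of app knowledge I'm iffy about.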

Summary

In the end, I prefer Appium, given that it forces a mental disconnect from the app. It's testing the user interaction with the app. Espresso tests that the user interface is what you expect. Espresso lets you know too much about the app and check implementation details.

I favor Appium; I recognize this. I can confidently write tests in either, but I think Appium provides a better mental distinction that will allow more effective and less fragile tests.

The code at the end of this post is available here